By Mike Phillips

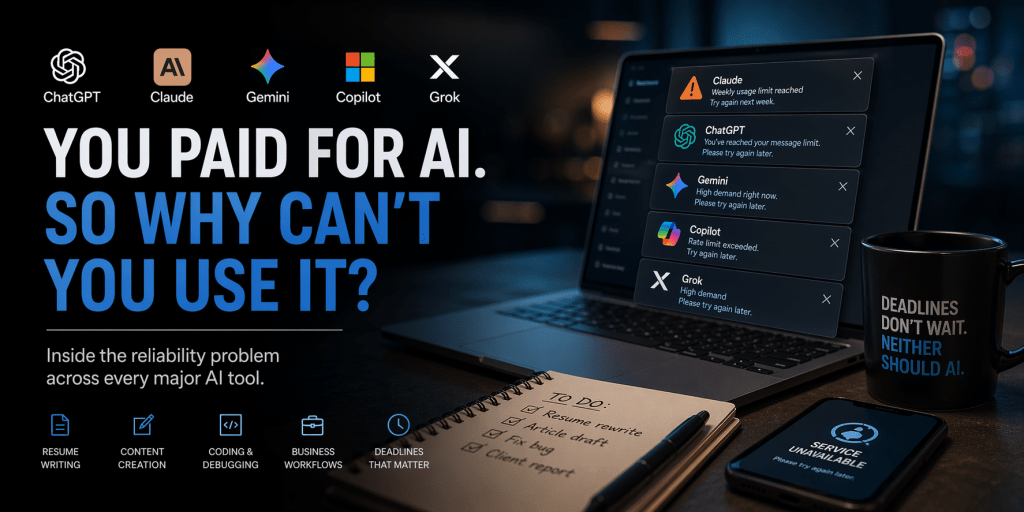

There is a pattern emerging across the major AI platforms, and it’s not tied to any one company.

A user sits down to work. Maybe it’s something simple—rewriting a resume, tightening language for a job application, iterating through a few drafts to get the tone right. Or maybe it’s more involved: an article on deadline, a block of code that won’t compile, a deliverable that has to go out before the end of the day.

They open the tool they pay for. They start working.

And then, somewhere in the middle of the process, it stops.

A usage limit appears. The session resets. The system slows down or returns a “high demand” message. The work doesn’t just pause—it breaks.

This is happening across platforms, whether the tool is built by OpenAI, Anthropic, Google, Microsoft, or xAI. The interfaces are different. The models are different. The problem is the same.

You can pay for access and still find yourself locked out when you need it.

The Resume Problem

Resume writing is a good place to start because it’s both common and time-sensitive.

Most people don’t approach it casually. They’re applying for something. They’re trying to translate years of experience into a few lines that will survive automated filters and stand out to a hiring manager. It’s iterative work—write, refine, adjust, repeat.

AI tools are well suited for that. They accelerate the process. They make it easier to test phrasing, restructure experience, and sharpen impact.

Until the moment they interrupt it.

You’re halfway through refining your experience section. You’ve finally found the right framing. Then the system cuts you off. A limit is reached. The session ends. Context disappears.

The problem isn’t just that the tool stops working. It’s that it stops at the exact moment continuity matters most.

Deadlines Don’t Wait

The same pattern shows up in writing and journalism.

A draft is taking shape. Sections are being rewritten. Tone is being adjusted. The tool is part of the workflow—not replacing the writer, but accelerating the process.

Then access degrades. Responses slow. A cap is hit.

There’s no clean stopping point. No natural break. Just a system constraint imposed mid-process, forcing the work to either pause or move somewhere else without the same context.

For work tied to deadlines, that’s not a minor inconvenience. It’s a disruption.

When the Code Stops Mid-Thought

Developers run into a different version of the same problem.

Debugging with AI is iterative by nature. You test something, refine it, ask follow-up questions, adjust again. The value comes from continuity—the ability to build on what was just analyzed.

When access is interrupted, that continuity disappears. The system doesn’t just stop answering questions. It breaks the chain of reasoning that got you there.

What was supposed to be a faster path becomes fragmented, forcing a reset that erases the advantage.

Business Workflows, Interrupted

Inside companies, the stakes are different, but the pattern holds.

AI is being integrated into documentation, internal tools, customer support workflows, and analysis pipelines. It’s no longer an experiment in many environments—it’s part of how work gets done.

When those systems slow down or become unavailable, teams don’t just wait. They revert. Processes stall. People fall back to manual work that the system was supposed to replace.

The promise of efficiency turns into a question of reliability.

The Same Pattern, Different Platforms

The experience varies slightly depending on the platform, but the underlying behavior is consistent.

ChatGPT, from OpenAI, tends to enforce message-based or model-based limits and sometimes slows under load. Claude, from Anthropic, is more likely to impose hard stops tied to session or usage caps that aren’t clearly defined. Gemini, developed by Google, often throttles performance without much explanation. Copilot, from Microsoft, inherits the constraints of the broader Microsoft ecosystem it runs in. Grok, built by xAI, can simply deny access when demand spikes.

Different systems, same outcome: access is conditional, and the conditions aren’t fully visible.

What You’re Actually Paying For

The mismatch comes from expectation.

Users think they are paying for a tool they can use when needed. What they are actually buying is priority within a shared system.

That system is governed by constraints that are mostly invisible. Usage isn’t just about how many messages you send. It’s about how much data you process, how complex the requests are, how long the session runs, and how many other users are active at the same time.

The limits shift because the system is constantly balancing those factors.

From the outside, it feels inconsistent. From the inside, it’s controlled scarcity.
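To make the idea of shifting limits concrete, here is a toy model in Python. It is only a sketch under loud assumptions: no platform publishes its formula, and every name, weight, and threshold below (`request_cost`, the 0.5 load threshold, the 120-minute session scale) is invented for illustration. It simply shows how one fixed budget can buy fewer messages when the shared system is busy.

```python
from dataclasses import dataclass


@dataclass
class Request:
    tokens: int           # amount of data processed in this request
    complexity: float     # hypothetical multiplier, 1.0 = a simple request
    session_minutes: int  # how long the session has been running


def request_cost(req: Request, active_users: int, capacity: int) -> float:
    """Hypothetical cost charged against a user's budget for one request."""
    load = active_users / capacity
    # Assumption: cost starts rising once the shared system is over half full.
    load_factor = 1.0 + max(0.0, load - 0.5)
    # Assumption: longer sessions are charged more per request.
    session_factor = 1.0 + req.session_minutes / 120
    return req.tokens * req.complexity * session_factor * load_factor


def messages_until_limit(budget: float, req: Request,
                         active_users: int, capacity: int) -> int:
    """How many identical requests fit in the budget at the given load."""
    return int(budget // request_cost(req, active_users, capacity))


req = Request(tokens=1000, complexity=1.0, session_minutes=0)
quiet = messages_until_limit(50_000, req, active_users=100, capacity=1000)
busy = messages_until_limit(50_000, req, active_users=1000, capacity=1000)
print(quiet, busy)  # prints "50 33"
```

In this made-up model the user did nothing differently, yet the same plan covers 50 requests at a quiet hour and 33 at a busy one. That is the kind of shift that looks arbitrary from the outside.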

The Reliability Gap

What’s emerging is a gap between how these tools are positioned and how they behave under pressure.

They are marketed as productivity tools—faster, smarter, more capable. They are priced in tiers that imply increasing levels of access.

But none of them guarantee availability when demand spikes.

There are no meaningful service guarantees for individual users. No clear, standardized explanation of how limits are calculated in real time. No consistent expectations across platforms.

The result is a system that works well—until it doesn’t—and gives the user little insight into why.

The Bottom Line

AI tools are powerful enough to become part of how people work.

They are not yet reliable enough to be treated like infrastructure.

That tension shows up in small, practical ways: a resume that can’t be finished in one sitting, a draft interrupted mid-edit, a debugging session cut short, a workflow that stalls when access disappears.

You can pay for access.

You just can’t always count on it being there when you need it.
