Companies are investing heavily in AI tools to improve operations. Many are finding the results underwhelming. The problem usually isn’t the technology.

By Mike Phillips

There is a pattern emerging inside organizations that have moved quickly to adopt AI tools. They implement a capable platform — a large language model for internal knowledge retrieval, an AI assistant for workflow automation, a documentation generator — and they wait for productivity to improve. Often, it doesn’t. Sometimes things get measurably worse.

The diagnosis companies reach is usually the same: the AI isn’t smart enough, the model needs tuning, or the rollout was handled poorly. These can all be true. But they often aren’t the real problem.

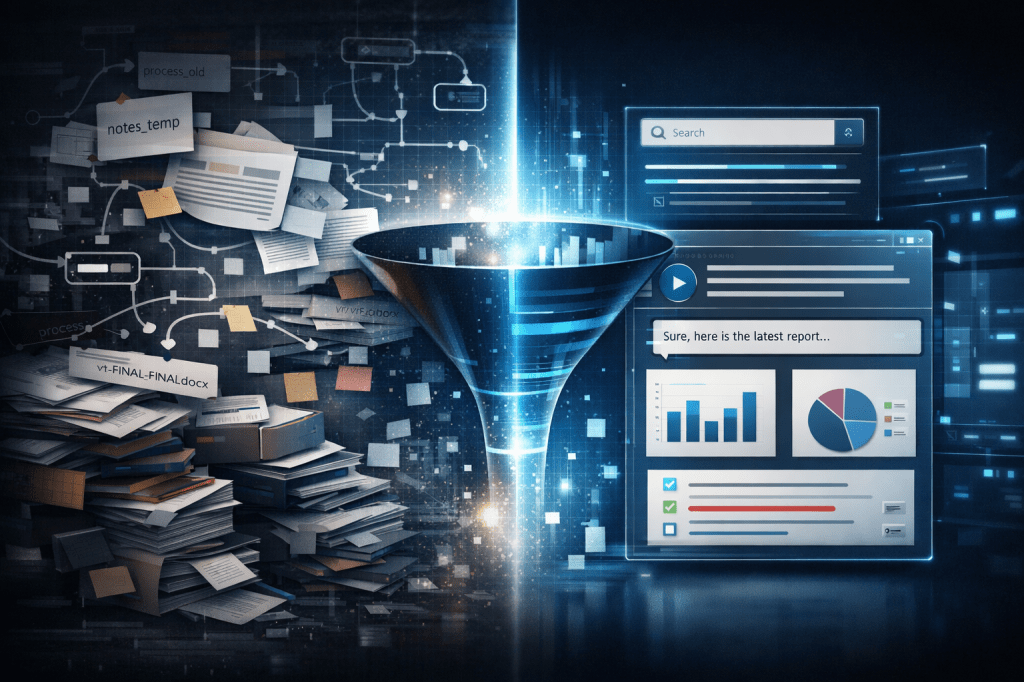

The real problem is that the organization fed a sophisticated system a diet of disorganized information and expected it to produce clarity. That’s not a technology failure. It’s a systems failure that was present long before anyone opened an AI contract.

Garbage in, garbage out — at scale

The phrase “garbage in, garbage out” predates machine learning by decades, but it has never been more consequential than it is now. When a team uses an AI tool to search internal documentation, answer customer questions, or surface relevant processes, the quality of every output is directly constrained by the quality of what’s underneath it.

If your documentation is fragmented across twelve drives, half-updated, and written by six people who have since left the company, an AI retrieval system will confidently surface the wrong answer faster than a human ever could. The speed of the technology amplifies the dysfunction of the underlying system; it doesn’t correct it.


This is the structural problem that most AI implementation guides skip past. They focus on prompt engineering, model selection, and integration architecture. These are legitimate concerns. But they treat the information environment as a given rather than as a variable that needs to be addressed first.

The workflow problem is older than AI

Before AI entered the picture, most organizations already had a workflow problem they hadn’t fully solved. Processes existed in people’s heads rather than in written form. Onboarding relied on institutional memory rather than documented systems. Decisions were made consistently by experienced employees and inconsistently by everyone else.

This was manageable, up to a point. Teams found workarounds. Tribal knowledge passed informally. Errors got caught downstream. The cost was real but diffuse enough that it rarely became a crisis.

AI changes that calculus. When you automate an undocumented process, you don’t systematize it — you embed its inconsistencies at machine speed. When you use an AI to answer employee questions about internal policy, and that policy exists in three contradictory versions in three different places, your AI doesn’t resolve the contradiction. It picks one version, or worse, synthesizes a plausible-sounding hybrid that doesn’t reflect reality anywhere.

Structure is the prerequisite

The organizations getting the most out of AI tools right now share a common characteristic: they invested in information architecture before or alongside their AI rollout. They audited their documentation. They standardized their process formats. They identified which workflows were actually repeatable and which ones only appeared to be. They created systems that were usable by humans first, and then found that those systems were also legible to AI.
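As a rough illustration of what a first-pass documentation audit might look like, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `Doc` record, its fields, the one-year staleness threshold, and the example paths are hypothetical, not drawn from any particular tool or from the organizations described above. It flags three of the symptoms discussed in this article: documents that have not been updated in a long time, documents whose owner has left, and multiple documents that claim the same title.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class Doc:
    """Hypothetical record for one document in an inventory."""
    path: str
    title: str
    last_updated: date
    owner_active: bool  # assumption: we know whether the author is still at the company


def normalize(title: str) -> str:
    """Lowercase and collapse whitespace so 'PTO Policy' matches 'pto  policy'."""
    return " ".join(title.lower().split())


def audit(docs, today, max_age_days=365):
    """Return paths of stale and orphaned docs, plus groups sharing a title."""
    stale = [d.path for d in docs if (today - d.last_updated).days > max_age_days]
    orphaned = [d.path for d in docs if not d.owner_active]

    by_title = defaultdict(list)
    for d in docs:
        by_title[normalize(d.title)].append(d.path)
    duplicates = {t: sorted(p) for t, p in by_title.items() if len(p) > 1}

    return {"stale": stale, "orphaned": orphaned, "duplicates": duplicates}


if __name__ == "__main__":
    # Illustrative inventory; the paths and dates are made up.
    inventory = [
        Doc("drive-a/pto-policy.md", "PTO Policy", date(2021, 3, 1), False),
        Doc("drive-b/PTO_policy_v2.md", "pto  policy", date(2024, 6, 1), True),
        Doc("wiki/onboarding.md", "Onboarding", date(2024, 1, 15), True),
    ]
    report = audit(inventory, today=date(2024, 12, 1))
    for category, findings in report.items():
        print(category, findings)
```

A script like this does not resolve contradictions; it only shows where a human needs to decide which version is authoritative before an AI tool is pointed at the collection.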

This isn’t a new insight in information management circles. But it runs against the cultural instinct to treat AI as a shortcut past the hard organizational work. It isn’t. It’s a multiplier — and multipliers work in both directions.

The teams that will extract the most from this generation of AI tools are the ones that treat documentation and process design as a genuine technical discipline, not an afterthought. The structural work — organizing scattered information, mapping repeatable workflows, building systems that are clear and consistent — is not a precursor to the real work. It is the real work.

Companies that are planning AI implementations, or trying to understand why current ones are underperforming, are often looking in the wrong direction. The question isn’t which model to use. It’s whether the information environment is ready for any model at all.

| Work with Mike Phillips Tech |
|---|
| Mike Phillips Tech helps organizations turn disorganized documentation and informal processes into structured, usable systems, the kind that actually perform when AI tools are layered on top. Services include documentation structuring, workflow design, and complex problem breakdown. |
| Get in touch at mikephillips.tech |
